This post picks up from where I left off here. I had decided to do a bit of experimenting with generative AI but didn’t really know where to start. I spoke to somebody I know who has used Midjourney, but they made it sound very complicated and I was, frankly, baffled by the idea that you had to use it via Discord. Inevitably this led to a lot of googling, through which I discovered that I could get some limited free access to Dall-E via my Microsoft account. This seemed like a sensible place to start.

Parallel to this, I was also in the early stages of thinking about making work about the food delivery riders that currently proliferate in large UK cities like Glasgow. My work often addresses something that could be called ‘the life of the city’ and I have been interested for a while in the ubiquitous, orange-jacketed workers that gather outside food joints and hammer along the Glasgow pavements on their de facto motorcycles. These workers seem to me to occupy a number of interstitial spaces: they are neither seen nor invisible, they are neither employed nor autonomous, and they are simultaneously indispensable and yet seemingly deprecated, devalued, and often dehumanised. In this early, unformed thinking I was sketching out some thoughts about categorisation and agency, and the ways in which contemporary capitalism creates, leverages, and exploits these ontological disruptions.

With wild naivety I chucked myself into my usual MOW. I strapped on the GoPro, picked up my camera, and headed to McDonald’s. In fairly short order I realised what a bad idea this was. I never make a secret of the fact that I am filming when I am in public and always try to respond sensitively to any indication of unhappiness or resistance. This, however, was on another level. I have filmed and photographed openly in public for long enough to know that most people are utterly oblivious. Here, I had barely set foot in the outside eating area of McDonald’s before I was confronting seriously bad vibes. I legged it.

This led to a re-think. In many ways the experience reinforced my interest. The issue now was how to approach the subject. Perhaps AI might be the answer. If I couldn’t easily collect images of Just Eat riders then perhaps I could generate them using Dall-E. I looked through the film that I had made and there were some images that I could have used, but not very many. I had learned enough about AI at this point to understand that I needed to write prompts, so I decided to use these pictures to help guide that process.

My first attempt at a prompt was based on the above picture: ‘photo of a sad Deliveroo employee looking at his mobile phone. He is stood in front of a drive-through fast food restaurant. It is sunny and bright’. Here are some of the initial outputs:

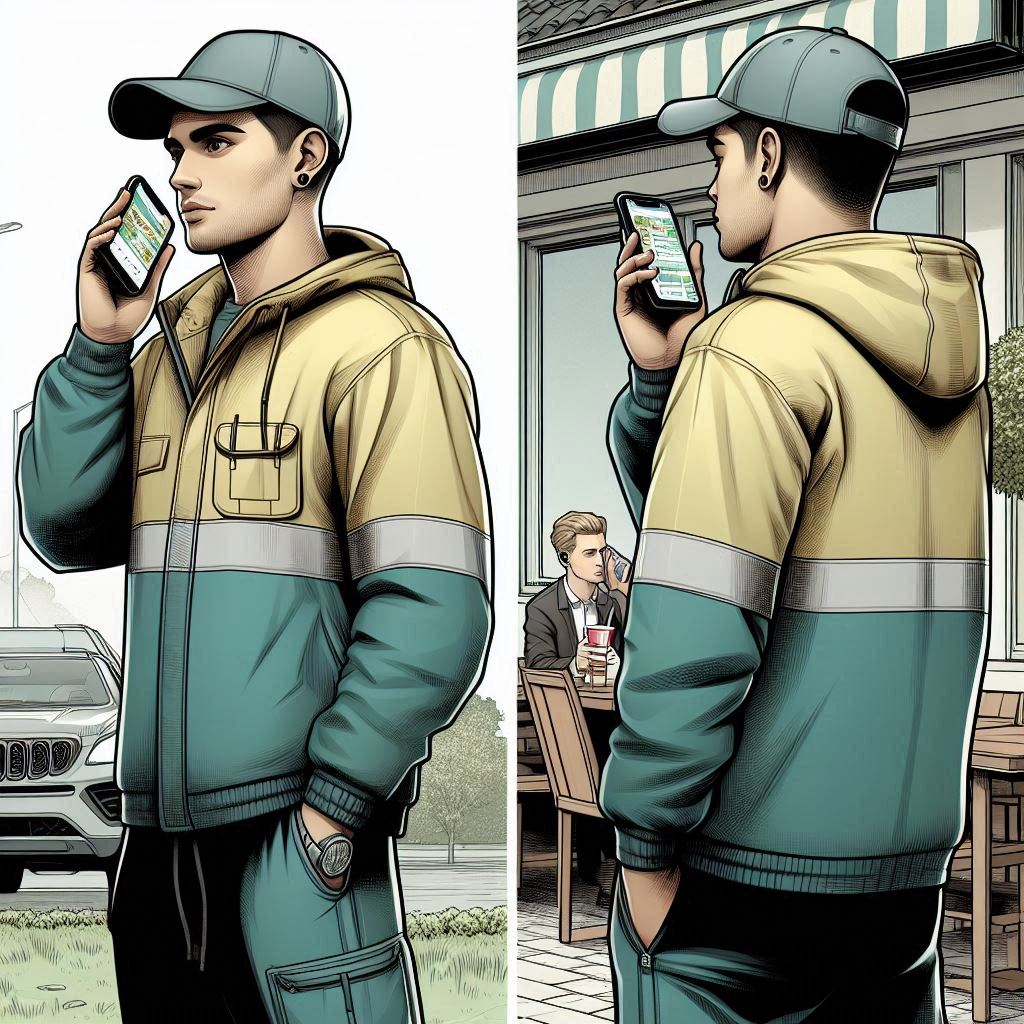

I was (naively) quite surprised by a number of things: how different the outputs were every time I ran the same prompt, how ‘stylised’ they were, how different they all were from the image that I started with, and just how odd they were. I wasn’t, however, discouraged. It was clear that writing prompts was more complicated than I had realised. I started to research prompting. I had, by now, managed to get Midjourney working, so I started inputting the prompts into both AIs to compare the results. Midjourney has some tools that are pretty useful for learning about how prompting works, including the option of getting the AI to write prompts for pre-existing images. Midjourney also has the facility to upload visual prompts to guide the output (Dall-E does too, but not on the free tier that I was still using at this point). I uploaded the original image to Midjourney and continued to refine my prompt. The outputs were getting closer to the original image but were still a long way away from something that I felt I could use.

This was also becoming surprisingly labour-intensive. You can generate a lot of outputs very quickly (credit permitting) and it soon became apparent that it is a slippery process: as you get closer to one aspect of what you are looking for, other aspects can often move further away. I was fascinated and needed to understand what was happening. I didn’t abandon the delivery rider idea at this point, but I decided that I needed to pivot my primary attention to learning about how generative AI works.
