As I write my Study Statement, I am becoming increasingly aware of the need to set some boundaries on my research. Jonathan made the point yesterday that this is not a huge piece of writing, saying ‘you are artists’, and this was a helpful calibration. I am not trying to write a PhD; this is a focused piece of practice-driven research written from the position of an artist. I guess that one of the things researchers have to make their peace with is the constant feeling that there is always just one more source to be located and that, if you don’t find it, your research will be completely invalidated.

This has led me to reflect on what kind of knowledge is actually necessary for the work I am doing, or might do, in the months ahead. My interest in AI is driven by the ontological challenges that I perceive in the current headlong rush to embrace deeply flawed and over-hyped technologies. As such, I need to know how AIs work, but not at exhaustive levels of technical detail. I need to know enough about AI to feel able to articulate and defend my conclusions and outputs. I do not need to get lost down rabbit-holes of AI engineering. Knowing where to stop is as important as knowing where to begin.

I am thinking about this in the light of something I have learned this morning about the word ‘ontology’. I have been using the term in a relatively loose, philosophical sense: what exists, how reality is structured, how categories shape experience. This sort of usage is common in art theory and critical writing and leans toward a view of existence in which instability, uncertainty and multiplicity are constantly navigated and negotiated.

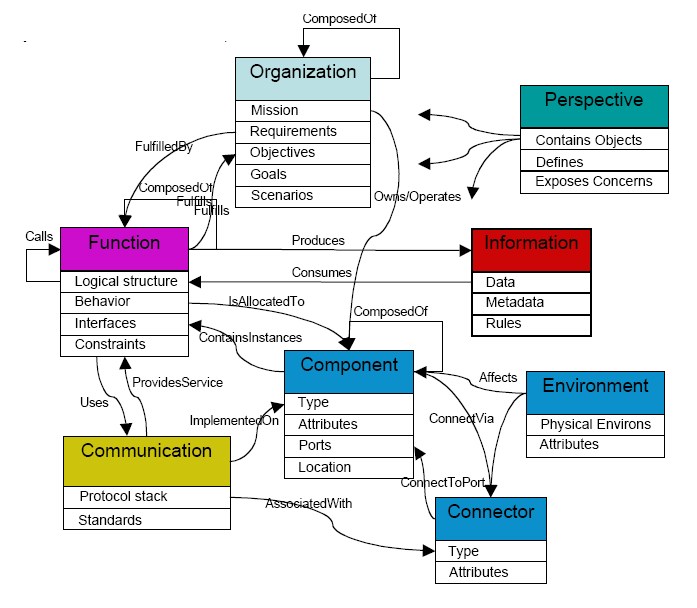

However, in AI terms, ‘ontology’ has a very specific and practical meaning. It refers to the system of categories, attributes, and relationships that are built into the system architecture. There is nothing philosophical or speculative about this; it is a framework of ‘facts’ that shape the ways in which the AIs join up the data points in their vast data sets. These ontologies impose order on data and are therefore by their nature limiting and controlling.

There seems to me to be an interesting dichotomy in the two usages. In philosophical terms, ontology can embrace ambiguities and contradictions. In AI engineering, ontology seeks to eliminate these possibilities. AI ontology is necessarily reductive as its purpose is to control uncertainty and facilitate the efficient functioning of the system.

This has given me cause to think about where there might be other slippage in the space between what we might call philosophical and technical language. Terms like intelligence, learning, and understanding are used freely in both spheres but with radically different meanings. AI engineers use these terms to narrow things down, to create certainties that allow the system to work. In philosophical and critical spaces these terms are often used to do the opposite, questioning and destabilising understandings and beliefs.

I guess this also explains where Musk gets the engineers to meddle whenever he wants to change Grok’s view of anything.

Learning this today (not the Musk bit) has helped me to think about the framing of my research. I don’t want to over-invest in the mechanics of AI. What I want to think about more is the use of language in the framing of the AI narrative and what I think that might be serving to obscure. I don’t want to make work that is simply about how AI works (or doesn’t), but rather work that asks questions about the wider AI discourse. I also need to not get sucked into a game of bibliography Pokémon (gotta catch ’em all!) and then get overwhelmed by the volume of information that I have collected. This is about creating a critical framework in which the work can be produced and evaluated.
